Databricks Lakeflow Connect provides fully managed connectors for ingesting data from relational databases using change data capture (CDC). Each connector tracks changes in the source database and applies them incrementally to Delta tables.
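
To make "applies them incrementally" concrete, the following is a minimal conceptual sketch of how an ordered batch of change events can be replayed against a table keyed by primary key. It is not the connector's implementation: the event shape (`op`, `id`, `row`) and the function name `apply_changes` are assumptions made up for this illustration.

```python
from typing import Any

Row = dict[str, Any]


def apply_changes(table: dict[int, Row], changes: list[dict[str, Any]]) -> dict[int, Row]:
    """Replay an ordered batch of change events onto an in-memory table.

    The event format is hypothetical: each event carries an "op"
    ("insert", "update", or "delete"), the primary key "id", and,
    for inserts and updates, the new "row".
    """
    for change in changes:
        key = change["id"]
        op = change["op"]
        if op == "delete":
            # Deleting a key that is already absent is treated as a no-op.
            table.pop(key, None)
        elif op in ("insert", "update"):
            # Inserts and updates both overwrite the row for that key.
            table[key] = change["row"]
        else:
            raise ValueError(f"unknown operation: {op!r}")
    return table
```

Only the rows named in the change events are touched, which is why a CDC load can be cheaper than re-reading the whole source table.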

Supported connectors

| Connector | Description |
|---|---|
| MySQL | Ingest data from MySQL databases using change data capture (CDC) for efficient incremental loads. |
| PostgreSQL | Ingest data from PostgreSQL databases using change data capture (CDC). |
| Microsoft SQL Server | Ingest data from Microsoft SQL Server using change data capture (CDC) or full snapshot. |

Connector components

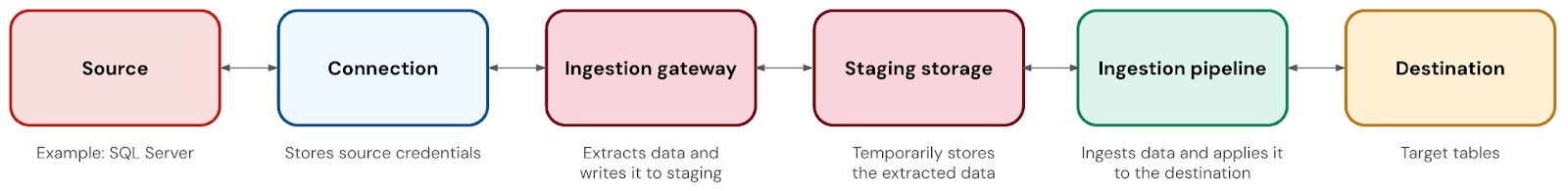

A database connector has the following components:

| Component | Description |
|---|---|
| Connection | A Unity Catalog securable object that stores authentication details for the database. |
| Ingestion gateway | A pipeline that extracts snapshots, change logs, and metadata from the source database. The gateway runs on classic compute, and it runs continuously so that it captures changes before the source database truncates its change logs. |
| Staging storage | A Unity Catalog volume that temporarily stores extracted data before it's applied to the destination table. This lets you run the ingestion pipeline on any schedule, even as the gateway continuously captures changes. It also helps with failure recovery. The staging storage volume is created automatically when you deploy the gateway, and you can customize the catalog and schema where it lives. Data is automatically purged from staging after 30 days. |
| Ingestion pipeline | A pipeline that moves the data from staging storage into the destination tables. The pipeline runs on serverless compute. |
| Destination tables | The tables where the ingestion pipeline writes the data. These are streaming tables, which are Delta tables with extra support for incremental data processing. |
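
The decoupling that staging storage provides can be pictured with a toy model: the gateway appends records continuously, the ingestion pipeline reads whatever it has not yet applied whenever it happens to run, and old data is purged after the retention period. The class and method names below are hypothetical, and the real staging volume is managed by Databricks; in particular, treating a record that is exactly 30 days old as still retained is an assumption of this sketch.

```python
from datetime import datetime, timedelta

RETENTION = timedelta(days=30)  # retention period stated for staging storage


class StagingVolume:
    """Toy in-memory stand-in for staging storage (illustration only)."""

    def __init__(self) -> None:
        self._items: list[tuple[int, datetime, dict]] = []
        self._next_seq = 0      # sequence number given to the next staged record
        self._applied_seq = -1  # highest sequence number already handed to the pipeline

    def __len__(self) -> int:
        return len(self._items)

    def stage(self, record: dict, staged_at: datetime) -> None:
        """Called by the (continuously running) gateway."""
        self._items.append((self._next_seq, staged_at, record))
        self._next_seq += 1

    def read_unapplied(self) -> list[dict]:
        """Called by the ingestion pipeline on whatever schedule it runs."""
        batch = [rec for seq, _, rec in self._items if seq > self._applied_seq]
        self._applied_seq = self._next_seq - 1
        return batch

    def purge(self, now: datetime) -> None:
        """Drop records older than the retention period."""
        self._items = [item for item in self._items if now - item[1] <= RETENTION]
```

In this model, a pipeline that runs once a day still sees every change the gateway staged since the previous run, as long as it runs within the retention window.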

Network connectivity

The ingestion gateway runs on classic compute in your Azure Databricks workspace VPC or VNet, and must be able to reach the source database over the network.

Any network path that allows the gateway to reach the database is supported, including VPN, Azure ExpressRoute, AWS Direct Connect, VPC or VNet peering, and public endpoints.

Cross-cloud connectivity is supported. For example, an Azure Databricks workspace can ingest from an AWS Aurora PostgreSQL database if the appropriate network connectivity exists between the two environments.
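
Before deploying a gateway, it can help to confirm that a TCP connection to the database is possible from the same network the gateway will use. The following is a minimal sketch using only the Python standard library; the helper name `can_reach` and the hostname in the usage note are hypothetical, and a successful TCP connection only shows that the network path is open, not that authentication or CDC configuration is correct.

```python
import socket


def can_reach(host: str, port: int, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        # create_connection resolves the host name and opens a TCP connection.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS failures, refused connections, and timeouts.
        return False
```

For example, `can_reach("db.example.internal", 5432)` would probe a PostgreSQL server on its default port; the default ports for MySQL and Microsoft SQL Server are 3306 and 1433. Run the check from compute in the same VPC or VNet as the gateway, since a result from another network says little about the gateway's path.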